So, you have probably been reading a lot about SAP Hana and Hadoop, in every possible flavor. And why not? Hana is a super-fast and powerful technology that will very soon become a hard requirement for most of SAP’s products. Hadoop is an open source platform for distributed storage and processing of vast amounts of data.

Some say Hadoop is dead due to the financial troubles of the leading companies. I say it’s just heating up for the next round. Plus, there are barely any other options for an on-premises data lake. And there are still many enterprises that invest in this open source technology. They are doing it for a good reason, which I’ll try to outline here.

People are naturally keen on new technology trends. I am too, but sometimes we just go too far too fast. And being keen is not, by itself, a business case or a reason to invest.

The idea behind Hadoop

The whole idea behind Hadoop started at IT giants like Google and Yahoo. They developed distributed file systems and distributed computing for their own specific use cases. And they have been making millions with it for years now.

You may ask: What’s the problem? Well, quite often people think that if a technology worked for other companies, it should work for their company too, right? Unfortunately, that’s not the case. There is a big difference between developing a new technology to serve a strong business use case and treating the implementation of that technology as the use case itself.

If you don’t know how your organization would benefit from technologies like Hadoop, you need to start looking for use cases and preferably for those that would make such a time-consuming implementation worth trying.

Connection between Hana and Hadoop

This is where the connection between Hana and Hadoop starts to make a lot of sense. And it’s easy math. If you want to implement Hana, you can save a lot on licenses and hardware by downsizing the system and keeping some of the data in Hadoop. This offloading is one of the scenarios we at Datavard manage quite often. Essentially, we analyze how much of the data in the system is actively used and create offloading strategies for the data that isn’t. You would be very surprised how much data in an enterprise SAP system, especially in a business warehouse, is literally never used.
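To make the "easy math" concrete, here is a minimal sketch of how such a usage analysis could be expressed. It is not the actual Datavard method. The `TableStats` record, the table names, the sizes and the 365-day threshold are all assumptions made up for illustration, and a real analysis would read usage statistics from the SAP system itself.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class TableStats:
    """Hypothetical usage record for one table or data object."""
    name: str
    size_gb: float
    last_read: Optional[date]  # None means no read access was ever recorded


def offload_summary(tables, today, cold_after_days=365):
    """Split tables into hot and cold and estimate the remaining footprint.

    A table counts as cold (an offloading candidate) if it was never read
    or was last read more than `cold_after_days` ago. The threshold is an
    assumption, not a recommendation.
    """
    cold = [
        t for t in tables
        if t.last_read is None or (today - t.last_read).days > cold_after_days
    ]
    total_gb = sum(t.size_gb for t in tables)
    cold_gb = sum(t.size_gb for t in cold)
    return {
        "total_gb": total_gb,
        "cold_gb": cold_gb,
        "remaining_gb": total_gb - cold_gb,  # what would still go to Hana
        "cold_share": cold_gb / total_gb if total_gb else 0.0,
        "offload_candidates": [t.name for t in cold],
    }


# Invented example data, purely illustrative.
tables = [
    TableStats("SALES_2012", 800.0, None),
    TableStats("SALES_CURRENT", 300.0, date(2024, 5, 1)),
    TableStats("LOGS_OLD", 400.0, date(2020, 1, 15)),
]
summary = offload_summary(tables, today=date(2024, 6, 1))
print(summary["offload_candidates"], summary["remaining_gb"])
```

With these invented numbers, two of the three tables are offloading candidates and only the actively used data would need to be sized for Hana. The point is the shape of the calculation, not the figures.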

After offloading data to Hadoop or any other target, your SAP system is ready to migrate to Hana. It’s not only smaller, it’s also prepared for future data growth. Of course, you can still consume and change the offloaded data as if it were still in your old system.

Although this is a very tangible cost-saving case that we recommend, the question remains: Does it make sense to implement Hadoop along with the Hana system? The average Hana system holds a few terabytes, while a Hadoop implementation starts at ~100 terabytes for a proof of concept and easily grows to petabytes for “real” clusters. Let’s not forget that there are other possible targets for offloading, such as a standard RDBMS or a filesystem.

To assess the feasibility of a complete Hana and Hadoop solution, we need to dig a bit deeper.

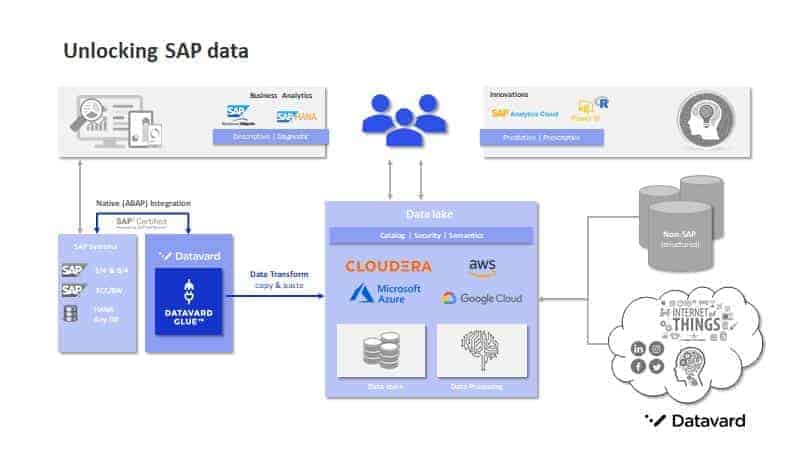

Increasing the value of data with integration

By integrating SAP with a big data platform, you gain many opportunities to increase the value of your data. This comes from combining the intelligence in your core business data with data about the environment around your business.

Those environmental variables can be, for example, availability and price changes of materials for your production, sentiment analysis data for your marketing strategy, customer-related data for your sales, customer behavior patterns for retail and many more. As long as you are aware of the variables, you can effectively gather the related data and combine it with the company’s core business data.

To process all those data sources, you need a powerful and scalable platform like Hadoop and lean integration with SAP. Hadoop comes with native components capable of processing most of the data sources you will need, but SAP is an exception. This is due to SAP’s data model and application layer. If you simply take data from the underlying database, you will get data that just doesn’t make sense or, even worse, data that includes personal or other sensitive information you should not have access to in the first place.

Consequently, we always recommend a lean tool for integration with SAP. By “lean” we mean that the tool doesn’t require any additional hardware component in your network, integrates closely with the SAP application layer, unlocks the data model for data scientists and engineers from the big data world, and follows SAP authorization concepts.

If we look back at the advantages of integration (cost savings from a smaller Hana footprint, combined with options to enhance the value of your data in Hadoop), we might be able to justify the investment in the implementation.

I say ‘we might’ because we are still missing the actual numbers. Production implementations can take years, which makes this more of a program-level or strategic decision than a single project.

Based on our experience, it’s possible to see the first benefits of offloading and integration after 3 to 6 months. This estimate assumes that you use smart software and an experienced project team. It also assumes that you are not at the beginning of the journey, meaning you have your Hana system and Hadoop platform already up and running and you are actively talking to your company’s business departments about what their business variables are.
