The SAP Data Warehouse Cloud (“DWC”) gets pretty close to a plug-and-play solution, per my findings.

As recap, what are the steps to build a data warehouse?

- Explore the source system

- Develop a data model of the data warehouse

- Implement the transformations to turn the raw data into key performance indicators

- The physical movement of the data

- Semantic model in the Business Intelligence tool

- Performance optimizations

- Back to square one, adding more data

There are two extreme options to do that. A very hands-on approach is to copy the source system data 1:1 and do the transformations at query time. The other extreme is to build an Enterprise Data Warehouse with a proper data model from the start, user roles, security, etc. in mind.

Both variants are valid. Often, one project iteration adds new data the simple way and, once the data has proven useful, it is integrated into the official data model. How does the DWC support that?

Data Federation

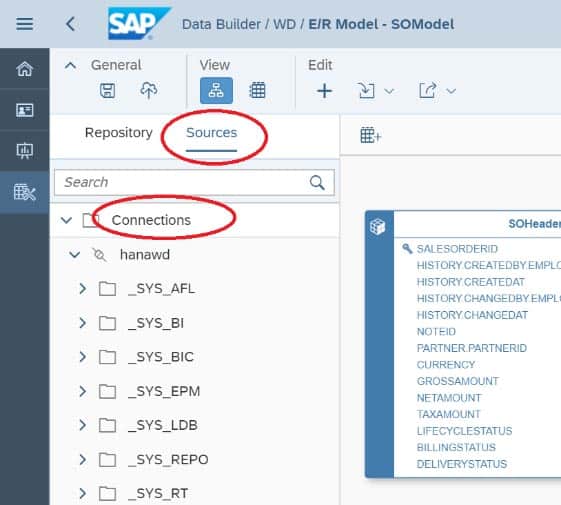

To access on-premise source data, the Hana feature Smart Data Integration (SDI) is used. The SDI Data Provisioning Agent is installed inside the company network and it acts as a bridge between the Hana database, the DWC, and all on-prem systems – the sources.

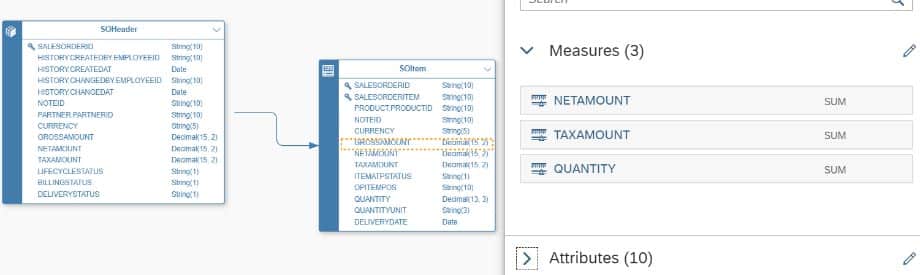

The desired tables are added to the E/R Model, including additional metadata like the business names, relationships and other information to help the user later.

At this stage, the data access is purely virtual – it is a Data Federation approach. If the user executes the query “select sum(revenue) from SOHeader”, the DWC database tries to execute as much as possible in the source system – here the entire query – and only the result is sent back over the network, which would be a single row in this example.

No data replication, no data duplication – the data is up to date by design and new tables are available in DWC within seconds. This is indeed a very powerful data integration technique.

Data Replication

However, Data Federation has downsides, too. Most concern performance. All queries the data warehouse users execute are actually computed at the source. The IT department will not be thrilled about thousands of additional queries compromising the ERP system’s response times.

Even if, the queries can only be as fast as the source system is able to produce the result set plus network transfer times. One of the reasons to build a data warehouse in the first place is to avoid these two problems; otherwise, the Business Intelligence tools could have been connected to the source systems directly. That does not make much sense.

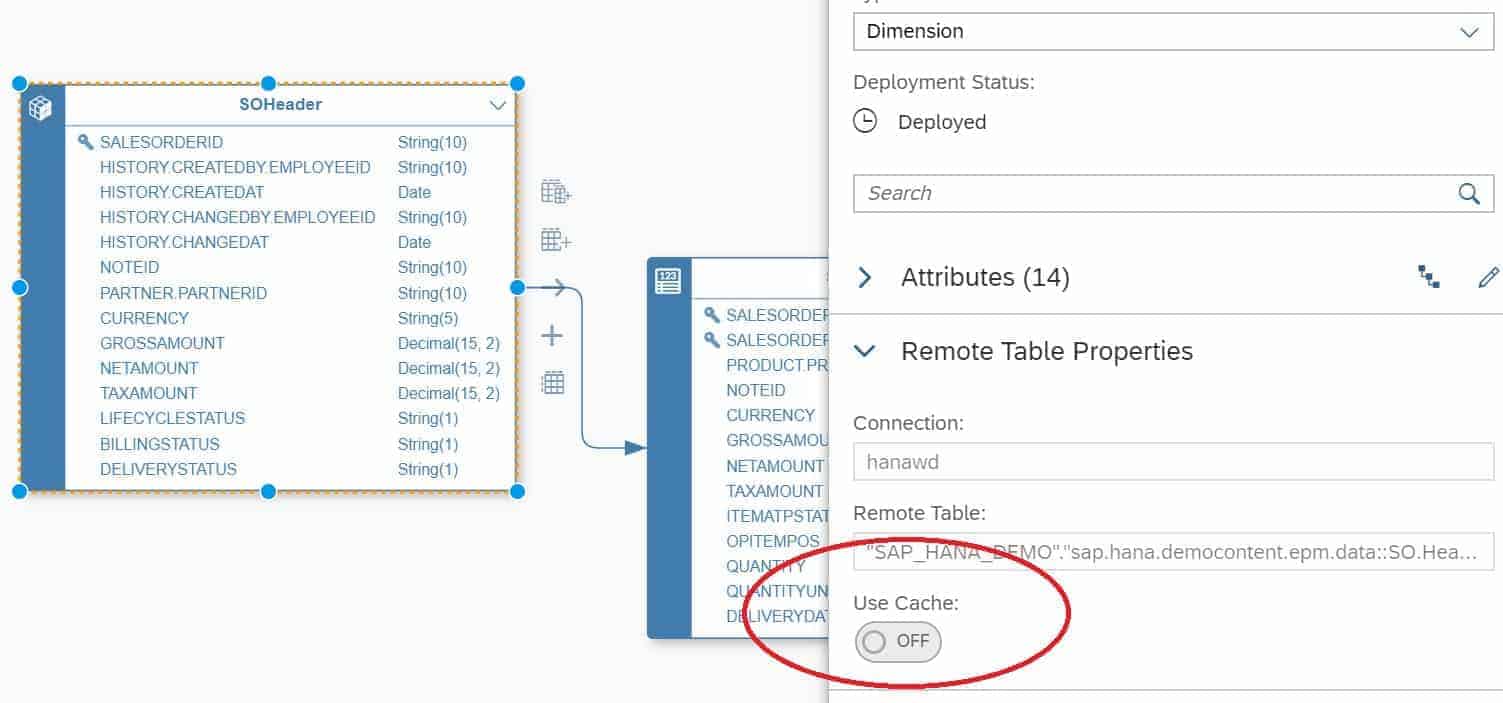

The only solution is to copy the data off the source and into the Hana database. In DWC, this is as simple as turning on the Use Cache flag for the tables.

Then data is copied from the source system just once and all queries the DWC users execute go against the local DWC Hana tables. What is missing is to keep the cached data up to date, though. Without current data, the value of the reports is questionable.

Interestingly, the SDI feature supports that, it just needs to be activated in DWC. To be more precise, when activating the Use Cache feature, a Hana SDI FlowGraph object is generated under the cover to execute the data movement. Enabling real time is a checkbox in the FlowGraph as well. A FlowGraph has even more necessary features, like performing the initial load of the data in multiple parallel chunks.

Transformations I

Once data is available, the next step is transforming the raw data into valid Key Performance Indicators (KPI).

Example: Part of the Sales/Distribution module of the SAP ERP system is the table VBAP with the field NETWR storing the value for each order line. The desired KPI shall be the OrderValue. A cancelled order contributes a value of zero, for a product return the value has to be taken negative. All this knowledge about the internals is unknown to the normal user and therefore should be defined centrally.

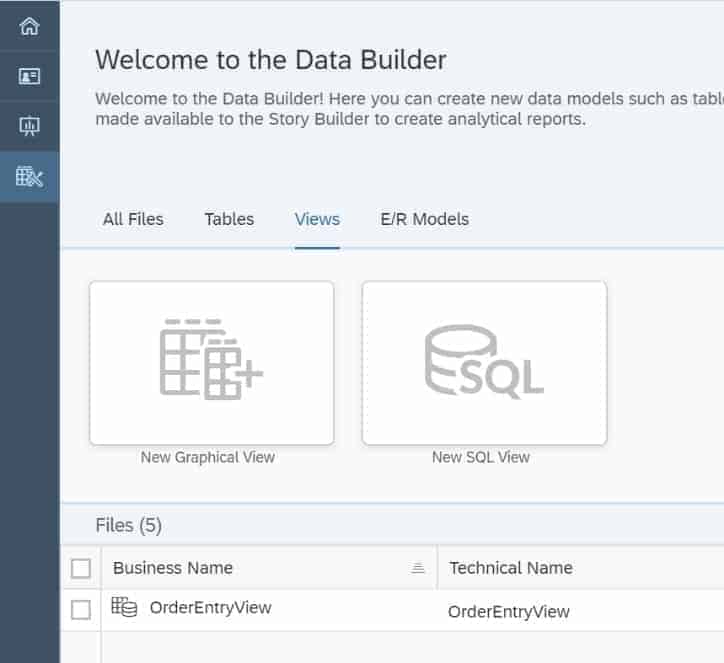

In DWC, just like in Hana, Views are used for that; either Graphical Calculation Views or SQL Views.

Although Hana itself has editors in the WebIDE to build such views, DWC went the extra mile and created a nicer version of that and integrated it into its own UI. I personally think that this is very user friendly. Otherwise, we would need to switch between the different development environments way too often.

Transformations II

This approach – to create virtual tables, set them to caching and then perform the transformations in views – works well for simple cases. In real life, the limitations are felt soon. Some transformations are slow, others simply not possible via SQL.

Again, an example from my past: One table contains all credit-related bookings and the master data table contains the information about monthly repayment, interest rate and terms. The KPI to calculate was the number of months until the current outstanding amount has been repaid. This is certainly not a simple task, and SQL is the wrong language.

But even if we could use SQL for that, why should the user wait for this calculation to complete whenever he executes a query? It does not matter how fast Hana is, it will take minutes or even hours to complete anyway.

The better approach is to move all the calculations that can be done early out of the views and store their values pre-calculated in the table already. Then the table with the sales order values has the NETWR field but also the converted OrderEntryAmount, making it easy for the database to sum up the value. The table with the credit information gets an additional field for the projected life of loan, making it easy for the database to find all loans open greater ten years.

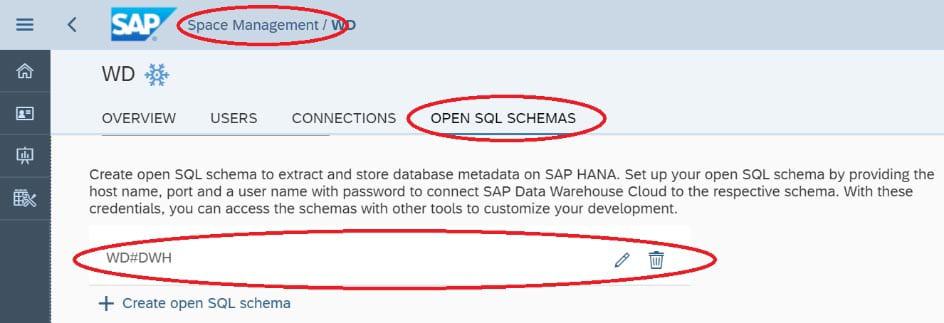

This is the strong suit of ETL Tools. Thankfully, DWC allows to use them. Internally, DWC has two types of database schemas. One is used to create Hana objects via the UI, the other is called “Open SQL Schema” and added via the UI.

This is a normal Hana database schema and therefore any tool can create new tables, load the tables, and so on.

As a result, Data Warehouse Cloud allows me to do simple things via virtual tables and complex tasks via any ETL tool.

Given the fact that the internally used Hana SDI FlowGraphs are meant for data transformations (it is a Hana-based ETL tool, in fact), enabling ETL-like capabilities would be the next logical step. DWC would then support all options plus switching between them.

- Setting the Use-Cache flag creates a FlowGraph object internally.

- Next would be to add a real-time cache flag, which sets the corresponding flag in the FlowGraph.

- The next step would be for the user to move calculations from the views into the FlowGraphs like the OrderEntryAmount calculation.

- And in the final step, the user can edit the generated FlowGraph and add even more complex calculations like the credit projection.

I am curious to see if there will be developments in that direction. SDI itself is architected that way; I made sure of that back in my days at SAP.

Semantic Layer

The final step when building a Data Warehouse is adding semantic information for the Business Intelligence tool. The main reason is to support the end users when building queries and protect them from creating logically wrong queries. In that layer, join conditions between tables are defined, nicer names added, fields classified into measures or attributes and sub types thereof – things like that. This is done in the E/R Model editor of DWC.

One important piece missing now is how to combine measures from different areas. In the end, the real value of Business Intelligence is to combine data for Actual vs Plan comparisons; Order vs Deliveries for the shipping backlog; current year revenue vs previous year. The only option today is to create another view which has both measures. This works but is inflexible, among other things.

To my knowledge, development is working on the part already and it will be built like in its ‘parallel universe’, the SAP Business Objects Information Design Tool.

Verdict from a product manager perspective

A product manager asks questions like “What is the unique selling point?”; “What are the market opportunities?” and “In which direction is the market developing?”

From this point of view, SAP did an excellent job. The unique selling point is certainly the Hana speed. Just recently, I copied all data of one database to Hana and was shocked about the difference in query speeds. It is breathtaking, with simple and complex queries alike. With DWC, the customer gets a Hana database plus help for building a data warehouse solution, all at an attractive price.

The other question to ask is how much the areas feature richness, price point and market requirements overlap. Even the best tool will not be a success if the market needs something else or it is too expensive for the target audience.

A solution like Data Warehouse Cloud helps small customers the most. This is matched by the pay-per-use pricing model and the simplicity for the end users. For large customers, the solution is open, so every fancy task can be done. I doubt large customers will complain about a low price and simple-to-use UIs, either.

Verdict as architect

The user can do the simple and time-consuming tasks easily. For complex requirements, there are enough options to get the job done – in the worst case by using the Open SQL Schema.

Some parts are not there yet but at least the architecture itself does not prevent adding them. If only all products would be built that way. The stories I could tell…

One of the missing pieces is the interaction between the two schemas. The DWC schema can use the Open SQL Schema objects; e.g. when creating a View, the Open SQL Schema objects are visible. The other way around is locked at the moment, however. Hence a table created via the DWC UI cannot be loaded via an ETL tool and the entire data model cannot be queried by e.g. another BI tool. It’s no rocket science to grant the corresponding privileges in the second schema.

Verdict as end user

The end user gets nice-to-use UIs and logical workflows. For real-life projects, some severe limitations apply as of today.

- Without real-time data, the caching on virtual tables cannot be used.

- Without the option to compare KPIs freely, only simple queries are useful.

- Without row-level security, it is limited to small projects.

Per my knowledge, SAP is working on all three areas and frankly, they are easy to solve.

Visualizations by Data Warehouse Cloud

Putting the excitement about the technology aside, what is a data warehouse generally needed for? Purely for turning data into information and to act on this information for the financial benefit of the business. Or, to put it plainly, visualization is key.

And that is my biggest issue with DWC and therefore SAP Analytics Cloud. It is a tool providing yet another way to visualize the data in bar charts and so on, just like any other tool can do as well. Where are charts with more than two dimensions? Today, the requirement is to compare actual vs. previous year revenue per product type, region and distribution channel and show the changes over the time.

Another example: Hana is all about real time. And yet, the diagrams are static. New data should be appended in the diagrams as they appear in the database instead!

We had so many good concepts in this regard, it is well overdue to finally make it happen. Here is a glimpse of what the market provides as visualizations. DWC would deserve some of these.

Competitive situation

The key factors of a Cloud Data Warehouse are how easy it is to get data in; the query response times of state-of-the-art queries; and the visualization options.

To my knowledge, SAP DWC is the only integrated solution that has synergies. Looking at Amazon AWS with the various databases or Snowflake with a purpose build Data Warehouse Cloud solution, both use openness as their main theme. Customers use their ETL tool of choice to get data in. Customers use their BI tool of choice to report on the data. There’s nothing wrong with having that option, but providing solutions for these parts out of the box is even better.

SAP is the only company listed as leader in all required aspects. It is one of the leaders in databases, in data integration and in Business Intelligence. The potential is there, SAP just needs to finally act on it.

Regarding “What is missing is to keep the cached data up to date”, Hana 2 SP5 got that feature finally. It is called “virtual table snapshot”.